Dense wavelength-division multiplexing (DWDM) and co-packaged or near-package optics (CPO/NPO) now sit at the heart of AI infrastructure design. As training clusters grow and inference traffic rises, the network no longer plays a supporting role. Instead, it shapes cluster efficiency, power consumption, latency, and long-term scalability. In the AI era, faster chips alone are not enough. The interconnect fabric has to keep pace.

At the same time, operators face a more complex challenge. They must increase bandwidth, control heat, reduce power, and keep systems maintainable. Therefore, the industry is moving toward a more layered optical architecture. In that shift, DWDM and CPO/NPO have become a highly practical combination. Together, they support both dense short-reach interconnects and high-capacity transport across larger network domains.

Why AI Clusters Need a New Interconnect Model

AI traffic behaves very differently from traditional cloud traffic. In older data centers, north-south flows often dominated. However, AI clusters generate massive east-west traffic between accelerators, memory pools, storage systems, and switching layers. As a result, the network directly affects job completion time and resource utilization.

Moreover, the pressure grows at every layer. Higher lane speeds increase signal loss over electrical traces and copper cables. Greater density raises thermal stress. Larger clusters also complicate cabling and expansion. Because of this, legacy copper links and conventional pluggable optics face mounting limits. They still matter, but they no longer solve the full problem alone.

For that reason, the market needs a new architecture. It must reduce electrical bottlenecks near the chip. It must also scale bandwidth across halls, campuses, and metro connections. This is where DWDM and CPO/NPO begin to show their true strategic value.

The Distinct Roles of DWDM, CPO, and NPO

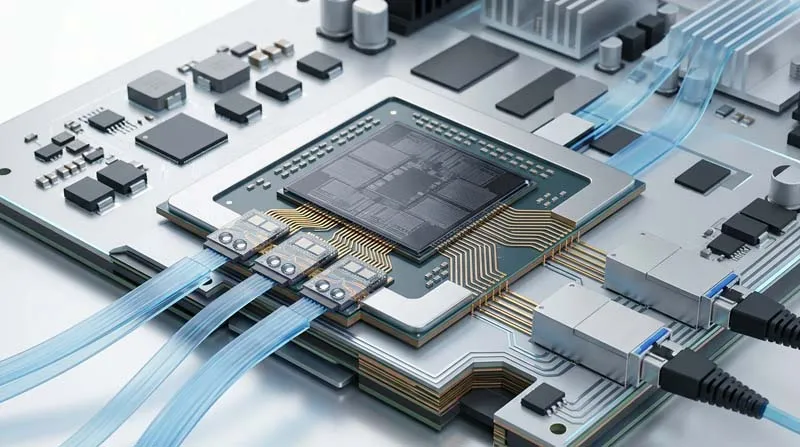

To understand the opportunity clearly, we must separate the roles of these technologies. CPO, or co-packaged optics, places optical engines on the same package as the switching ASIC. This approach shortens electrical traces, improves signal integrity, and lowers system power at very high speeds. In principle, CPO offers a path toward very high bandwidth density.

NPO, or near-package optics, takes a more balanced route. It places the optics close to the ASIC package rather than inside it. NPO still cuts electrical path length and supports high-speed operation, but it preserves more flexibility in manufacturing, testing, replacement, and field maintenance.

DWDM works at a different scale. It does not replace CPO or NPO. Instead, it increases transport capacity by sending multiple wavelengths, each carrying an independent signal, across the same fiber pair. As a result, DWDM supports high-capacity connections across rooms, campuses, metros, and regional sites.
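The capacity gain from wavelength multiplexing is simple arithmetic: the number of channels that fit on the grid, multiplied by the rate each channel carries. The sketch below illustrates this under stated assumptions. The 4000 GHz usable band, 100 GHz channel spacing, and 400 Gb/s per wavelength are illustrative values chosen for round numbers, and the function names are hypothetical. None of these figures describe a specific product.

```python
def dwdm_channel_count(usable_band_ghz: int, channel_spacing_ghz: int) -> int:
    """Number of wavelengths that fit on a fixed grid within the usable band."""
    if usable_band_ghz <= 0 or channel_spacing_ghz <= 0:
        raise ValueError("band and spacing must be positive")
    return usable_band_ghz // channel_spacing_ghz


def fiber_pair_capacity_gbps(channels: int, rate_per_channel_gbps: int) -> int:
    """Aggregate capacity of one fiber pair carrying `channels` wavelengths."""
    return channels * rate_per_channel_gbps


# Illustrative assumptions, not vendor data.
channels = dwdm_channel_count(usable_band_ghz=4000, channel_spacing_ghz=100)
capacity = fiber_pair_capacity_gbps(channels, rate_per_channel_gbps=400)
print(f"{channels} channels, {capacity} Gb/s per fiber pair")
```

Halving the channel spacing or raising the per-wavelength rate scales the result proportionally, which is why the same fiber plant can absorb several generations of growth.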

In simple terms, CPO and NPO optimize short-reach optical integration close to compute and switching resources. DWDM expands the transport backbone that connects those resources into a larger AI network. That is why DWDM and CPO/NPO should be seen as complementary technologies rather than competing choices.

Why NPO Looks More Practical in 2026 and 2027

CPO has strong long-term appeal. Its performance ceiling is high, and its role in future AI systems is clear. However, real deployment depends on more than technical ambition. It also depends on packaging yield, thermal control, testing workflows, serviceability, and operating risk.

This is where NPO stands out. First, NPO delivers real gains in power efficiency and signal performance because it shortens the electrical path. Second, it avoids some of the deeper packaging and maintenance challenges that come with full co-packaging. As a result, system vendors and operators can adopt it more easily within current engineering models.

Moreover, many AI builders are not asking for the most radical design tomorrow. Instead, they want a design they can deploy, scale, and service over the next two years. Therefore, DWDM and CPO/NPO become especially important in the 2026–2027 window. NPO offers a realistic near-term upgrade path, while DWDM supports the wider network expansion that large AI systems demand.

Why Rack-Level Optimization Is Not Enough

A common planning mistake is to focus only on the board or only on the module. That view is too narrow for modern AI infrastructure. Once clusters grow from racks to pods, and from pods to campuses, the transport layer becomes just as important as the switch layer.

This is why DWDM and CPO/NPO form a meaningful architectural bridge. NPO or CPO can improve density and efficiency near the switch. Yet traffic must still move across buildings and between data centers. At that point, the system needs a transport layer that delivers high capacity, uses fiber efficiently, and scales at predictable cost. DWDM provides exactly that capability.
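To make the fiber-efficiency point concrete, the following sketch compares how many fiber pairs a given inter-building demand would need with and without wavelength multiplexing. It assumes, for illustration only, that a non-multiplexed link carries one wavelength per fiber pair, that the demand is 12.8 Tb/s, that each wavelength carries 400 Gb/s, and that a DWDM system carries 40 wavelengths per pair. The function name and all values are hypothetical.

```python
def fiber_pairs_needed(
    demand_gbps: int,
    rate_per_wavelength_gbps: int,
    wavelengths_per_pair: int = 1,
) -> int:
    """Fiber pairs required to carry `demand_gbps`, rounding up at each step."""
    if min(demand_gbps, rate_per_wavelength_gbps, wavelengths_per_pair) <= 0:
        raise ValueError("all arguments must be positive")
    # Ceiling division using integers only.
    wavelengths = -(-demand_gbps // rate_per_wavelength_gbps)
    return -(-wavelengths // wavelengths_per_pair)


# Illustrative assumptions, not measurements.
demand = 12_800  # Gb/s between two buildings
without_dwdm = fiber_pairs_needed(demand, 400, wavelengths_per_pair=1)
with_dwdm = fiber_pairs_needed(demand, 400, wavelengths_per_pair=40)
print(f"without DWDM: {without_dwdm} pairs, with DWDM: {with_dwdm} pair(s)")
```

Under these assumptions the multiplexed link also has spare wavelengths left over, so later growth means lighting more channels rather than pulling new fiber.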

Consequently, AI network design can no longer rely on isolated upgrades. A faster local interconnect helps, but it does not solve campus-scale or regional-scale growth by itself. In contrast, a coordinated optical stack creates continuity from short reach to long reach. That continuity matters because AI capacity rarely stays fixed for long.

DWDM and CPO/NPO Enable a More Coherent AI Fabric

The strongest case for DWDM and CPO/NPO is not only performance. It is architectural coherence. AI operators need a fabric that evolves smoothly across different distances and deployment stages. A fragmented approach may remove one bottleneck while creating another. That leads to higher cost, slower expansion, and more operational friction.

By contrast, a coherent path aligns optical integration near the package with scalable transport across the broader network. Operators can then improve power and bandwidth density close to the switch while preparing for growth across campus and metro environments.

In addition, this approach improves investment logic. Teams can adopt NPO where serviceability matters today. They can continue using advanced pluggable optics where that model still fits. Meanwhile, they can expand network capacity with DWDM as cluster footprints grow. This is more resilient than forcing one optical model into every layer from day one.

A Practical Upgrade Path for AI Infrastructure Builders

For most builders, the best strategy is phased evolution. That is another reason why DWDM and CPO/NPO fit the market so well.

In the first phase, operators can adopt NPO to reduce power pressure and improve bandwidth density around switching systems. This step brings meaningful performance gains without introducing the full packaging complexity of CPO. In the second phase, they can strengthen the transport backbone with DWDM to connect larger AI domains across data halls, campuses, and metro sites. In the third phase, they can move toward deeper CPO adoption once the supply chain, thermal design, and service ecosystem become more mature.

This path is practical because it respects both physics and operations. It does not dismiss the promise of CPO. However, it also does not force the market to absorb packaging risk before deployment models are ready. Therefore, DWDM and CPO/NPO provide a disciplined roadmap rather than a single-point solution.

Why This Shift Matters for Industry Competition

The next stage of AI competition will not be won by compute density alone. It will be won by systems that connect compute efficiently, expand cleanly, and remain maintainable under real operating conditions. For that reason, DWDM and CPO/NPO should be understood as a strategic framework, not merely as component-level trends.

For equipment vendors, this raises the standard. Success now depends on coordination across silicon, optics, packaging, transport, and operations. For cloud providers and AI infrastructure owners, the key metrics also change. Port speed still matters, but energy per bit, thermal stability, service efficiency, and future expansion matter even more.
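Energy per bit is straightforward to compute from two numbers most datasheets already provide: power draw and throughput. The sketch below shows the conversion to picojoules per bit. The two interface options and their wattages are hypothetical placeholders for illustration, not measured figures for any pluggable, NPO, or CPO product.

```python
def energy_per_bit_pj(power_watts: float, throughput_gbps: float) -> float:
    """Energy per bit in picojoules for a given power draw and throughput."""
    if throughput_gbps <= 0:
        raise ValueError("throughput must be positive")
    # 1 W at 1 Gb/s is 1e-9 J per bit, which equals 1000 pJ per bit.
    return power_watts / throughput_gbps * 1000.0


# Hypothetical options at the same 800 Gb/s port speed.
options = {
    "option A": (16.0, 800.0),  # (watts, Gb/s), assumed values
    "option B": (8.0, 800.0),
}
for name, (watts, gbps) in options.items():
    print(f"{name}: {energy_per_bit_pj(watts, gbps):.1f} pJ/bit")
```

Two ports with identical speed can differ by a factor of two in this metric, which is why port speed alone is a weak basis for comparison at cluster scale.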

As a result, the winners in this market will likely be the companies that can combine performance with deployment realism. That balance is exactly what makes DWDM and CPO/NPO so important today.

From Technology Direction to Real-World Deployment

As the market moves from concept to execution, experienced optical solution providers become more valuable. In this context, HTF offers a relevant example. HTF is a professional supplier of fiber optic products, WDM system solutions, and large-scale data transmission solutions. Its team brings more than ten years of experience in optical communication product development, fiber solution design, component engineering, and manufacturing.

HTF focuses on helping customers build, connect, and optimize optical infrastructure for global data centers, 5G networks, cloud computing, metro networks, and access networks. Its HT6000 compact OTN optical transport platform uses a CWDM/DWDM universal architecture. It supports transparent multi-service transmission, flexible networking, and scalable access. It also meets the demand for high-capacity nodes above 1.6T. For IDC and ISP operators, such a platform offers a practical foundation for WDM transport expansion in the AI era.

Conclusion

DWDM and CPO/NPO are not separate stories. Together, they define a pragmatic upgrade path for AI compute networks. NPO offers a realistic bridge between legacy pluggable models and deeper optical integration. CPO points toward a more advanced future. Meanwhile, DWDM provides the transport backbone that turns isolated compute clusters into scalable AI infrastructure.

Therefore, the most effective strategy is not to chase one technology in isolation. Instead, it is to align package-level optical evolution with network-level transmission capability. In the years ahead, those who deploy DWDM and CPO/NPO as a coordinated architecture will be far better positioned to build AI networks that are faster, cleaner, and ready to scale.