Why This Shift Matters Now

AI-driven DWDM Network architecture is becoming a core requirement for modern optical infrastructure built on dense wavelength-division multiplexing (DWDM). AI training clusters, inference platforms, and cross-region data replication now generate bursty traffic rather than smooth, steady growth. As a result, many operators and data center teams face a new challenge: they must deliver higher bandwidth, lower latency, and stronger resilience at the same time.

In the past, DWDM operations relied on static thresholds, manual tuning, and reactive troubleshooting. That model worked when traffic patterns were predictable. However, AI workloads create traffic tides that rise quickly and shift across time windows. Therefore, optical transport networks need faster sensing, faster decisions, and safer automated actions.

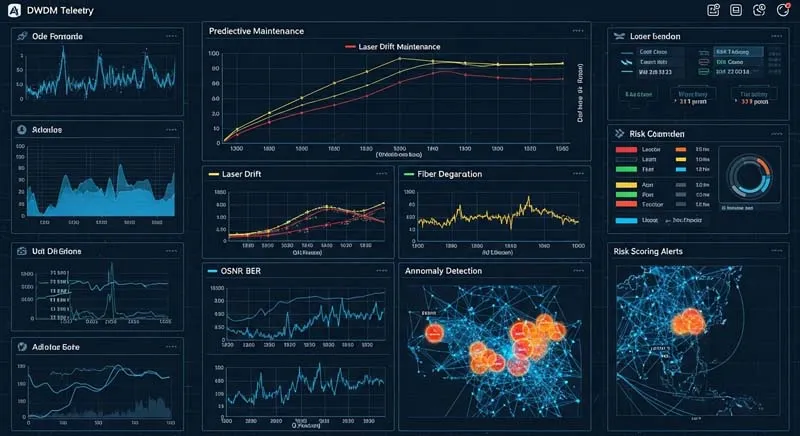

An AI-driven DWDM Network addresses this gap by combining real-time optical telemetry with machine learning. It does more than display alarms: it detects weak signals early, predicts degradation trends, and recommends or executes optimization actions within defined safety limits. Consequently, the network moves from manual operation to guided autonomy.

From Traditional DWDM Operations to Autonomous Optical Networks

Traditional DWDM networks are powerful, but their operational model often creates delays. Engineers usually respond after alarms fire. Then they inspect multiple layers, compare logs, check power levels, and tune parameters step by step. In complex multi-vendor environments, this process can take too long.

By contrast, an AI-driven DWDM Network follows a closed-loop logic: sense, analyze, predict, decide, execute, and verify. First, the system collects optical performance data continuously. Next, machine learning models identify abnormal patterns. Then the control plane or operations team applies an optimization strategy. Finally, the platform checks the result and refines future decisions.
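The closed loop described above can be sketched in a few lines. This is a minimal illustration, not a production controller: `run_closed_loop`, `ToyLink`, the 18 dB OSNR floor, and the idea that a correction maps directly onto OSNR are all assumptions made for the example.

```python
from typing import Callable, Optional


def run_closed_loop(
    sense: Callable[[], float],
    predict: Callable[[float], float],
    decide: Callable[[float], Optional[float]],
    execute: Callable[[float], None],
    min_osnr_db: float,
) -> str:
    """Run one pass of sense -> analyze/predict -> decide -> execute -> verify."""
    observed = sense()                 # sense: current OSNR in dB
    forecast = predict(observed)       # analyze and predict: projected OSNR
    if forecast >= min_osnr_db:
        return "no_action"
    action = decide(forecast)          # decide: a correction in dB, or None
    if action is None:
        return "escalate_to_engineer"
    execute(action)                    # execute the chosen correction
    # verify: re-measure and confirm that the margin was restored
    return "verified" if sense() >= min_osnr_db else "rollback_needed"


class ToyLink:
    """Hypothetical stand-in for a link; a correction changes OSNR directly."""

    def __init__(self, osnr_db: float) -> None:
        self.osnr_db = osnr_db

    def read(self) -> float:
        return self.osnr_db

    def correct(self, delta_db: float) -> None:
        self.osnr_db += delta_db


link = ToyLink(osnr_db=17.5)
outcome = run_closed_loop(
    sense=link.read,
    predict=lambda osnr: osnr - 0.5,             # assumed further 0.5 dB decline
    decide=lambda f: 1.0 if f > 15.0 else None,  # small fix, otherwise hand off
    execute=link.correct,
    min_osnr_db=18.0,
)
print(outcome)
```

In this toy run the forecast falls below the floor, a 1 dB correction is applied, and the re-measurement confirms the result, so `outcome` is `"verified"`. Passing the stages in as callables keeps the loop itself independent of any vendor interface.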

This shift matters because AI-era traffic does not wait for manual cycles. Moreover, service-level commitments for internet data center (IDC) interconnect, cloud backbones, and metro core links require shorter recovery windows. If the network can predict risk before service impact, teams can schedule maintenance during safe windows and avoid emergency response.

Why DWDM Is a Strong Fit for AI-Based Operations

DWDM networks offer a rich data foundation. Optical systems generate high-value telemetry such as optical signal-to-noise ratio (OSNR), bit error rate (BER), Q-factor, transmit and receive (Tx/Rx) power, laser temperature, bias current, amplifier gain, and alarm events. Because these metrics arrive as time series, machine learning models can analyze them effectively.

That is why an AI-driven DWDM Network is not a superficial “AI layer” added on top of legacy tools. Instead, it builds on measurable physical behavior. Laser drift, amplifier shifts, connector contamination, and fiber attenuation changes often leave early traces in the telemetry. Therefore, the system can identify risk trends before a hard failure occurs.

Another reason is control flexibility. Modern optical networks support gain adjustments, power balancing, channel-level tuning, and route coordination. However, these variables interact with each other. Manual tuning can solve one issue while creating another. In contrast, a model-driven approach can evaluate multiple signals together and propose safer parameter combinations.

Real-Time Optical Performance Analytics Improves Observability

A major benefit of an AI-driven DWDM Network is better observability. Traditional threshold alarms are useful, but they can miss trend-based risk. They can also trigger alarm storms when one fault cascades across multiple channels. As a result, engineers spend time filtering noise instead of fixing root causes.

Machine learning changes this workflow. For example, anomaly detection models can compare current optical behavior against historical baselines for the same channel, span, or node. Meanwhile, correlation models can link power fluctuations, BER movement, and temperature drift in one analysis chain. Therefore, teams gain a clearer picture of fault formation, not just fault visibility.
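One simple way to compare a reading against a channel's own baseline is a z-score. The sketch below is an assumption chosen for illustration, not a method named by the article; the function names and the threshold of 3 standard deviations are hypothetical, and production systems typically use richer models.

```python
import math
from statistics import mean, stdev


def baseline_zscore(history: list[float], current: float) -> float:
    """Z-score of the current reading against the same channel's history."""
    if len(history) < 2:
        raise ValueError("need at least two historical samples")
    diff = current - mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is infinitely unusual.
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / sigma


def is_anomalous(history: list[float], current: float, threshold: float = 3.0) -> bool:
    """Flag readings more than `threshold` standard deviations from baseline."""
    return abs(baseline_zscore(history, current)) > threshold


osnr_history_db = [20.0, 20.2, 19.8, 20.1, 19.9]  # made-up sample values
print(is_anomalous(osnr_history_db, 20.1))
print(is_anomalous(osnr_history_db, 19.0))
```

With these made-up values, 20.1 dB sits inside the normal spread while 19.0 dB is flagged, even though neither would necessarily cross a fixed alarm threshold.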

This matters in production. A small OSNR decline may look harmless in isolation. However, when it appears with amplifier gain drift and repeated transient alarms, it can signal a serious issue. An AI-driven DWDM Network can flag that pattern early and assign a risk score. Consequently, operations teams can prioritize actions based on likely impact, not only on alarm severity labels.
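The idea that co-occurring weak signals deserve a higher score than any one of them alone can be sketched as a weighted sum with a co-occurrence bonus. Every scale, weight, and cutoff below is a hypothetical value picked for the example, not a recommended setting.

```python
def _clip01(value: float) -> float:
    """Clamp a value into the range 0..1."""
    return max(0.0, min(1.0, value))


def risk_score(osnr_drop_db: float, gain_drift_db: float, transient_alarms: int) -> float:
    """Combine three weak signals into a 0..1 risk score (illustrative weights)."""
    osnr_term = _clip01(osnr_drop_db / 3.0)        # assume a 3 dB drop is full scale
    gain_term = _clip01(abs(gain_drift_db) / 2.0)  # assume 2 dB drift is full scale
    alarm_term = _clip01(transient_alarms / 10)    # assume 10 alarms is full scale
    base = 0.5 * osnr_term + 0.3 * gain_term + 0.2 * alarm_term
    # Each additional clearly active signal adds a bonus for co-occurrence.
    active = sum(term > 0.2 for term in (osnr_term, gain_term, alarm_term))
    bonus = 0.15 * max(0, active - 1)
    return round(_clip01(base + bonus), 3)


isolated = risk_score(osnr_drop_db=0.6, gain_drift_db=0.0, transient_alarms=0)
combined = risk_score(osnr_drop_db=0.6, gain_drift_db=0.8, transient_alarms=4)
print(isolated, combined)
```

The same 0.6 dB OSNR decline scores much higher when it appears together with gain drift and repeated transient alarms, which is the prioritization behavior described above.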

In addition, real-time analytics supports health profiling. The platform can maintain channel-level, link-level, and node-level health scores. These scores help teams compare sites, identify weak segments, and plan upgrades with stronger evidence.
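A health profile can be rolled up from channel scores to links and nodes. The sketch below assumes scores on a 0 to 100 scale, treats a link as only as healthy as its weakest channel, and averages link scores per node; these aggregation rules and the key layout are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean

ChannelKey = tuple[str, str, str]  # (node, link, channel), a hypothetical layout


def rollup_health(channel_scores: dict[ChannelKey, float]):
    """Aggregate channel health into link-level and node-level scores."""
    per_link = defaultdict(list)
    for (node, link, _channel), score in channel_scores.items():
        per_link[(node, link)].append(score)
    # Assumed rule: the weakest channel determines the link score.
    link_health = {key: min(scores) for key, scores in per_link.items()}

    per_node = defaultdict(list)
    for (node, _link), score in link_health.items():
        per_node[node].append(score)
    # Assumed rule: a node score is the mean of its link scores.
    node_health = {node: mean(scores) for node, scores in per_node.items()}
    return link_health, node_health


scores = {
    ("A", "A-B", "ch1"): 95.0,
    ("A", "A-B", "ch2"): 70.0,
    ("A", "A-C", "ch1"): 90.0,
}
link_health, node_health = rollup_health(scores)
print(link_health, node_health)
```

Using the minimum at link level keeps one degraded channel from being hidden by healthy neighbors; a team that prefers smoother scores could swap in a percentile instead.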

Predictive Maintenance Reduces MTTR and Protects SLA

Predictive maintenance is one of the strongest business cases for an AI-driven DWDM Network. Many optical failures do not appear suddenly. Laser wavelength drift, power drift, connector loss growth, and fiber aging usually develop over time. Therefore, prediction models can detect precursors and issue early warnings.

A practical model combines time-series trend analysis with fault history. First, the model learns normal behavior ranges for each optical component or link segment. Next, it measures deviation speed, deviation persistence, and cross-metric relationships. Then it produces a graded warning, such as advisory, warning, or high-risk. As a result, engineers can align the response to actual urgency.
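The grading logic above can be sketched with two of the three inputs: deviation speed (a least-squares slope) and deviation persistence (consecutive recent samples outside the normal range). Cross-metric relationships are left out for brevity. The function names, the three-sample persistence rule, and the example limits are assumptions.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of the series, in units per sample interval."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def persistence(values: list[float], low: float, high: float) -> int:
    """Count the most recent consecutive samples outside [low, high]."""
    count = 0
    for value in reversed(values):
        if low <= value <= high:
            break
        count += 1
    return count


def grade(values: list[float], low: float, high: float, slope_limit: float) -> str:
    """Map deviation speed and persistence to a graded warning."""
    fast = abs(trend_slope(values)) >= slope_limit
    sustained = persistence(values, low, high) >= 3  # assumed persistence rule
    if fast and sustained:
        return "high-risk"
    if fast or sustained:
        return "warning"
    if persistence(values, low, high) >= 1:
        return "advisory"
    return "normal"


# Made-up Tx power offsets in dB relative to the learned normal level.
drifting = [0.0, -0.1, -0.3, -0.6, -0.9, -1.2]
print(grade(drifting, low=-0.5, high=0.5, slope_limit=0.2))
```

The drifting series is both fast (about -0.25 dB per sample) and sustained (three samples below -0.5 dB), so it grades as `high-risk`.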

This approach changes operations from reactive repair to planned prevention. Instead of waiting for service impact, teams can replace components, clean connectors, rebalance power, or shift traffic during a maintenance window. Consequently, fault localization becomes faster and recovery paths become shorter.

In favorable scenarios, this approach can cut mean time to repair (MTTR) by up to 70%, although the actual reduction depends on telemetry quality and on how well predictions feed into maintenance workflows. That number matters because lower MTTR means more than faster repair. It also means stronger SLA performance, lower emergency labor cost, and better customer trust. For cloud interconnect and carrier backbone services, these gains directly support revenue protection.

Dynamic Gain and Power Optimization for AI Traffic Tides

AI workloads create “traffic tides.” Training jobs can start in batches. Inference loads can spike by region or by time. Data synchronization can peak overnight. Therefore, static optical settings often leave performance on the table.

An AI-driven DWDM Network can respond to this behavior through dynamic optimization. It continuously evaluates link state, channel margins, and traffic conditions. Then it adjusts amplifier gain, power balance, and channel allocation within safe limits. As a result, the network maintains stability while improving resource efficiency.

For example, channel power balancing can reduce edge-channel degradation in dense systems. Likewise, gain tuning can protect signal quality when traffic shifts increase load on specific paths. Moreover, the platform can coordinate with upper-layer routing or service orchestration to support cross-layer optimization.
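Channel power balancing within safe limits can be sketched as a bounded step toward a common target. The example below is a simplification under stated assumptions: it uses the mean of the dBm values as the target (a flattening target, not the average optical power), and the step size and power limits are hypothetical.

```python
def balance_channel_power(
    powers_dbm: dict[str, float],
    max_step_db: float = 0.5,
    floor_dbm: float = -3.0,
    ceil_dbm: float = 3.0,
) -> dict[str, float]:
    """Move each channel one bounded step toward the mean launch power."""
    target = sum(powers_dbm.values()) / len(powers_dbm)
    adjusted = {}
    for channel, power in powers_dbm.items():
        # Limit the change per iteration so that one pass cannot overshoot.
        step = max(-max_step_db, min(max_step_db, target - power))
        # Keep the result inside the allowed launch-power window.
        adjusted[channel] = max(floor_dbm, min(ceil_dbm, power + step))
    return adjusted


before = {"ch1": 1.0, "ch2": 0.0, "ch3": -2.0}  # made-up launch powers
after = balance_channel_power(before)
print(after)
```

Repeating the bounded step over several iterations narrows the spread gradually, which leaves room to re-measure signal quality between adjustments.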

This capability must remain disciplined. A well-designed AI-driven DWDM Network therefore uses guardrails, rollback logic, and staged deployment to avoid optimization oscillation and preserve network safety. In practice, the best systems support audit logs, explainable policy actions, and human override when needed.
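Guardrails, rollback, and audit logging can be combined in one small routine. In this sketch the `Amplifier` class, the 15 to 25 dB gain window, and the `measure_q_db` callback are hypothetical; a real system would call its own device and measurement interfaces.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Amplifier:
    gain_db: float
    audit_log: list[str] = field(default_factory=list)


def apply_gain_with_rollback(
    amp: Amplifier,
    new_gain_db: float,
    measure_q_db: Callable[[Amplifier], float],
    min_q_db: float,
    gain_limits_db: tuple[float, float] = (15.0, 25.0),
) -> bool:
    """Apply a gain change only inside limits; undo it if Q-factor degrades."""
    low, high = gain_limits_db
    if not low <= new_gain_db <= high:
        # Guardrail: refuse out-of-range requests before touching the device.
        amp.audit_log.append(f"rejected {new_gain_db} dB: outside {low}-{high} dB")
        return False
    previous = amp.gain_db
    amp.gain_db = new_gain_db
    amp.audit_log.append(f"applied {new_gain_db} dB (was {previous} dB)")
    if measure_q_db(amp) < min_q_db:
        # Rollback: restore the last known-good setting and record why.
        amp.gain_db = previous
        amp.audit_log.append(f"rolled back to {previous} dB: Q below {min_q_db} dB")
        return False
    return True
```

Each call leaves a trail in `audit_log`, so an engineer can later see which changes were rejected, applied, or reverted.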

A Practical Path to Autonomous Optical Networks

Organizations do not need to jump from manual operations to full autonomy in one step. Instead, most teams should follow a phased roadmap.

Phase 1: AI-Assisted Monitoring

At this stage, the platform improves visibility and anomaly detection. It supports engineers with smarter alerts and health scoring. However, humans still make all operational decisions.

Phase 2: AI-Assisted Decision Making

Next, the platform adds prediction and optimization recommendations. It suggests actions such as power rebalancing, component checks, or maintenance scheduling. Engineers review and approve these actions before execution.

Phase 3: Policy-Constrained Closed-Loop Automation

Finally, selected actions run automatically under strict policies. The system executes low-risk optimizations, validates outcomes, and records all changes. Meanwhile, teams retain manual takeover rights for complex events.
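The three phases can be expressed as a small policy gate that decides what happens to a proposed action. The action names and the set of low-risk actions below are hypothetical; each organization would define its own.

```python
from enum import Enum


class Phase(Enum):
    MONITORING = 1        # Phase 1: AI-assisted monitoring
    DECISION_SUPPORT = 2  # Phase 2: AI-assisted decision making
    CLOSED_LOOP = 3       # Phase 3: policy-constrained automation


# Hypothetical set of actions considered safe to run without review.
LOW_RISK_ACTIONS = {"power_rebalance", "gain_trim"}


def disposition(phase: Phase, action: str, approved_by_engineer: bool = False) -> str:
    """Decide how a proposed action is handled in the current adoption phase."""
    if phase is Phase.MONITORING:
        return "alert_only"  # humans make all operational decisions
    if phase is Phase.CLOSED_LOOP and action in LOW_RISK_ACTIONS:
        return "auto_execute"  # low-risk actions run under policy
    # Everything else needs an explicit engineer approval first.
    return "execute" if approved_by_engineer else "await_approval"


print(disposition(Phase.DECISION_SUPPORT, "power_rebalance"))
print(disposition(Phase.CLOSED_LOOP, "power_rebalance"))
print(disposition(Phase.CLOSED_LOOP, "traffic_reroute"))
```

Moving from one phase to the next then becomes a configuration change plus a review of which actions belong in the low-risk set.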

This phased model helps teams build trust and improve data quality. In addition, it reduces operational risk during adoption. Therefore, autonomous optical networking becomes an engineering program, not a marketing slogan.

Implementation Priorities That Improve Results

A successful AI-driven DWDM Network depends on more than algorithms. First, data quality must improve. Teams need consistent telemetry formats, time synchronization, and reliable event labels. Without this foundation, model outputs lose credibility.
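A basic data-quality gate can check each telemetry sample before it reaches a model. The field names, the dictionary layout, and the 5-second skew limit below are assumptions for illustration, not a standard schema.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical minimum schema for one telemetry sample.
REQUIRED_FIELDS = {"node", "channel", "timestamp", "osnr_db", "rx_power_dbm"}


def validate_sample(
    sample: dict,
    now: datetime,
    max_skew: timedelta = timedelta(seconds=5),
) -> list[str]:
    """Return a list of data-quality problems; an empty list means the sample passes."""
    problems = []
    missing = REQUIRED_FIELDS - set(sample)
    if missing:
        problems.append("missing fields: " + ", ".join(sorted(missing)))
    ts = sample.get("timestamp")
    if ts is not None:
        if not isinstance(ts, datetime) or ts.tzinfo is None:
            problems.append("timestamp must be a timezone-aware datetime")
        elif abs(now - ts) > max_skew:
            # Large skew suggests unsynchronized clocks or delayed delivery.
            problems.append("timestamp skew exceeds limit")
    return problems


now = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
good = {"node": "A", "channel": "ch1", "timestamp": now - timedelta(seconds=1),
        "osnr_db": 20.1, "rx_power_dbm": -8.2}
late = {"node": "A", "channel": "ch1", "timestamp": now - timedelta(seconds=30),
        "rx_power_dbm": -8.2}
print(validate_sample(good, now))
print(validate_sample(late, now))
```

Rejecting or quarantining samples that fail these checks is one way to keep inconsistent formats and clock drift from eroding trust in model output.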

Second, workflows must connect to operations systems. Predictions only create value when they trigger action. Therefore, alerts and recommendations should integrate with network management and operations support systems (NMS/OSS), ticketing, maintenance planning, and spare-part processes.

Third, governance must stay strong. Because optical transport supports critical services, automation needs policy boundaries, auditability, and rollback options. Moreover, teams should track metrics such as MTTR, false positives, early warning lead time, and optimization success rate.
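The tracking metrics listed above reduce to simple arithmetic once incidents and warnings are recorded. The helper names and sample figures below are made up for illustration; "false positives" is approximated here as the share of warnings that were never confirmed as real faults.

```python
def mean_hours(values: list[float]) -> float:
    """Average of a list of durations in hours (used for MTTR and lead time)."""
    if not values:
        raise ValueError("need at least one value")
    return sum(values) / len(values)


def unconfirmed_warning_share(issued: int, confirmed: int) -> float:
    """Share of issued warnings that were not confirmed as real faults."""
    if issued <= 0:
        raise ValueError("issued must be positive")
    return (issued - confirmed) / issued


def relative_reduction(before: float, after: float) -> float:
    """Fractional improvement, for example in MTTR between two periods."""
    return 1.0 - after / before


repair_hours = [4.0, 2.0, 6.0]      # made-up time to repair per incident
warning_lead_hours = [48.0, 72.0]   # made-up time from warning to fault

print(mean_hours(repair_hours))            # MTTR in hours
print(mean_hours(warning_lead_hours))      # mean early-warning lead time
print(unconfirmed_warning_share(20, 15))   # 5 of 20 warnings unconfirmed
print(relative_reduction(4.0, 1.2))        # MTTR falling from 4 h to 1.2 h
```

Computing these figures the same way before and after each rollout phase gives teams a consistent basis for judging whether automation is actually helping.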

Conclusion: Building the Optical Foundation for AI Growth

The next generation of optical transport will not compete only on hardware capacity. Instead, it will compete on sensing depth, prediction accuracy, and optimization speed. That is why the AI-driven DWDM Network is becoming a strategic foundation for carriers, cloud operators, and data center interconnect planners.

As AI traffic expands, networks must adapt in real time while protecting stability. Therefore, organizations that invest in observability, predictive maintenance, and dynamic optical control will gain a clear operational advantage. In short, an AI-driven DWDM Network moves DWDM from fast transmission infrastructure to autonomous, resilient, and business-aware optical transport.